If you view the two response variables as two independent ratings of the n parts, the Kappa coefficient equals +1 when there is complete agreement of the raters. When the observed agreement exceeds chance agreement, the Kappa coefficient is positive, and its magnitude reflects the strength of agreement. Although unusual in practice, Kappa is negative when the observed agreement is less than the chance agreement. The minimum value of Kappa is between -1 and 0, depending on the marginal proportions.

Categorical Kappa statistics (Fleiss 1981) are found in the Agreement Across Categories report.

The following notation is used:

• n = number of parts (grouping variables)

• m = number of raters

• k = number of levels

• ri = number of repeat ratings made by each rater on part i (i = 1, 2,...,n)

• Ni = m x ri. Number of ratings on part i (i = 1, 2,...,n). This includes the responses of all raters and all repeat ratings on the part. For example, if part i is measured 3 times by each of 2 raters, then Ni is 3 x 2 = 6.

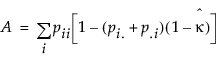

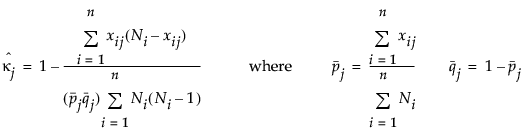

• xij = number of ratings on part i (i = 1, 2,...,n) into level j (j = 1, 2,...,k)

The individual category Kappa is as follows:

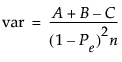

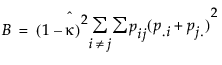

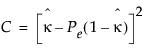
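As a rough illustration of how the counts xij and Ni feed into a category Kappa, here is a minimal Python sketch. It assumes the standard Fleiss (1981) estimator for a possibly unequal number of ratings per part, with the overall Kappa taken as the average of the category Kappas weighted by p x q. That choice is an assumption, not a transcription of the pictured formulas. The function name `fleiss_category_kappas` is hypothetical.

```python
def fleiss_category_kappas(x):
    """Category and overall Kappa from a count table (assumed Fleiss 1981 form).

    x[i][j] is the number of ratings of part i that fall into level j.
    Returns (list of category Kappas, overall Kappa).
    Raises ZeroDivisionError if a level is never used or is used for every rating.
    """
    n_ratings = [sum(row) for row in x]                 # Ni for each part
    total = sum(n_ratings)                              # all ratings on all parts
    pair_total = sum(n_i * (n_i - 1) for n_i in n_ratings)
    levels = range(len(x[0]))

    # Overall proportion of ratings in each level, and its complement.
    p = [sum(row[j] for row in x) / total for j in levels]
    q = [1.0 - p_j for p_j in p]

    kappas = []
    for j in levels:
        # Within-part disagreement about level j.
        disagreement = sum(
            row[j] * (n_i - row[j]) for row, n_i in zip(x, n_ratings)
        )
        kappas.append(1.0 - disagreement / (pair_total * p[j] * q[j]))

    # Overall Kappa as the p*q-weighted average of the category Kappas.
    weights = [p_j * q_j for p_j, q_j in zip(p, q)]
    overall = sum(w * k_j for w, k_j in zip(weights, kappas)) / sum(weights)
    return kappas, overall


# Three parts, six ratings each, two levels, complete agreement on every part.
kappas, overall = fleiss_category_kappas([[6, 0], [0, 6], [6, 0]])
```

With complete agreement on every part the disagreement term is zero, so each category Kappa is 1. A table in which every part splits its ratings evenly between two levels gives a negative value instead.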

The variances of the individual category Kappa and the overall Kappa are as follows:

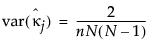

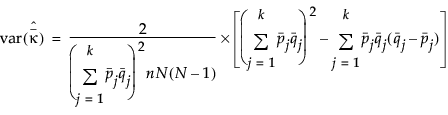

The standard errors of the individual category Kappa and the overall Kappa are shown only when there are an equal number of ratings per part (for example, Ni = N for all i = 1, 2,...,n).
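The equal-ratings requirement can be illustrated with a short Python sketch. It assumes the large-sample variances usually attributed to Fleiss for the hypothesis of no agreement beyond chance, which need a single N shared by all parts. Whether these match the pictured formulas exactly is an assumption, and the function name `kappa_standard_errors` is hypothetical.

```python
import math


def kappa_standard_errors(p, n, N):
    """Standard errors of the category and overall Kappa (assumed Fleiss form).

    p[j] is the overall proportion of ratings in level j,
    n is the number of parts, and N is the common number of ratings per part.
    Returns (SE of any single category Kappa, SE of the overall Kappa).
    """
    q = [1.0 - p_j for p_j in p]
    pq_sum = sum(p_j * q_j for p_j, q_j in zip(p, q))

    # Under this assumption the category variance is the same for every level.
    var_category = 2.0 / (n * N * (N - 1))

    # The overall variance also depends on how the ratings spread across levels.
    skew_term = sum(p_j * q_j * (q_j - p_j) for p_j, q_j in zip(p, q))
    var_overall = 2.0 * (pq_sum ** 2 - skew_term) / (pq_sum ** 2 * n * N * (N - 1))

    return math.sqrt(var_category), math.sqrt(var_overall)


# Two parts, six ratings per part, ratings split evenly between two levels.
se_category, se_overall = kappa_standard_errors([0.5, 0.5], n=2, N=6)
```

With only two levels the two standard errors coincide, because the overall Kappa then equals each category Kappa.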