JMP fits linear models to three different kinds of responses: continuous, nominal, and ordinal. The models and methods available in JMP are practical, are widely used, and suit the need for a general approach in a statistical software tool. As with all statistical software, you are responsible for learning the assumptions of the models you choose to use, and the consequences if those assumptions are not met. For more information, see "The Usual Assumptions" in this chapter.

For a continuous response, the basic model for each observation is

Y = (some function of the X’s and parameters) + error

The fitting principle is called least squares. The least squares method estimates the parameters in the model so as to minimize the sum of squared errors. The errors in the fitted model, called residuals, are the differences between the actual value of each observation and the value predicted by the fitted model.
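As an illustration of the least squares principle, the following minimal sketch fits a straight line, y = b0 + b1·x + error, with the closed-form least squares solution. The data values and the helper names `fit_line` and `sse` are hypothetical and chosen only for illustration; JMP handles general linear models, not just a single line.

```python
# Minimal least-squares sketch for a straight-line model y = b0 + b1*x + error.
# The data and the helper names are illustrative assumptions.

def fit_line(xs, ys):
    """Return (b0, b1) that minimize the sum of squared errors."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    b1 = sxy / sxx
    b0 = y_bar - b1 * x_bar
    return b0, b1

def sse(xs, ys, b0, b1):
    """Sum of squared residuals (actual value minus predicted value)."""
    return sum((y - (b0 + b1 * x)) ** 2 for x, y in zip(xs, ys))

xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]

b0, b1 = fit_line(xs, ys)
residuals = [y - (b0 + b1 * x) for x, y in zip(xs, ys)]
best = sse(xs, ys, b0, b1)

# Moving either estimate away from the least-squares solution increases the SSE.
assert sse(xs, ys, b0 + 0.1, b1) > best
assert sse(xs, ys, b0, b1 - 0.05) > best
print(f"b0={b0:.3f}, b1={b1:.3f}, SSE={best:.4f}")
```

Because the model has an intercept, the residuals from this fit sum to zero.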

The simplest model for continuous measurement fits just one value to predict all the response values. This value is the estimate of the mean. The mean is just the arithmetic average of the response values. All other models are compared to this base model.
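To see why the mean serves as the base model, here is a small sketch using made-up data. It checks that the mean gives a smaller sum of squared errors than nearby constants, and then compares a fitted line against the mean-only model using R-square (1 minus the ratio of the two SSEs). R-square is used here as one common summary of that comparison; the data and variable names are assumptions for illustration.

```python
# Sketch: the mean-only model predicts every response with a single value, the mean.
# Data are illustrative; R-square is one common way to compare against this base model.

ys = [2.1, 3.9, 6.2, 7.8, 10.1]
xs = [1.0, 2.0, 3.0, 4.0, 5.0]

def sse_constant(ys, c):
    """Sum of squared errors when every observation is predicted by the constant c."""
    return sum((y - c) ** 2 for y in ys)

mean = sum(ys) / len(ys)
sse_mean = sse_constant(ys, mean)

# Other constants give a larger SSE than the mean.
assert all(sse_constant(ys, mean + d) > sse_mean for d in (-1.0, -0.1, 0.1, 1.0))

# Fit a straight line by least squares and compare it with the mean-only model.
n = len(xs)
x_bar = sum(xs) / n
b1 = sum((x - x_bar) * (y - mean) for x, y in zip(xs, ys)) / sum((x - x_bar) ** 2 for x in xs)
b0 = mean - b1 * x_bar
sse_line = sum((y - (b0 + b1 * x)) ** 2 for x, y in zip(xs, ys))

r_square = 1 - sse_line / sse_mean  # share of the base-model SSE removed by the line
print(f"mean={mean:.2f}, SSE(mean)={sse_mean:.3f}, SSE(line)={sse_line:.3f}, R^2={r_square:.4f}")
```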

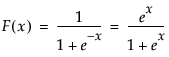

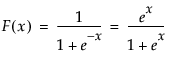

where F(x) is the cumulative distribution function of the standard logistic distribution:

$$F(x) = \frac{1}{1 + e^{-x}} = \frac{e^{x}}{1 + e^{x}}$$
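The following sketch evaluates the standard logistic CDF, F(x), and shows that its inverse, the log odds, recovers the linear predictor. It assumes that the two-level model gives the probability of the first level as F(Xβ), with one predictor x. The parameter values and the function names `logistic_cdf` and `logit` are hypothetical.

```python
import math

def logistic_cdf(x):
    """Standard logistic CDF: F(x) = 1 / (1 + exp(-x))."""
    return 1.0 / (1.0 + math.exp(-x))

def logit(p):
    """Inverse of F: the log odds log(p / (1 - p))."""
    return math.log(p / (1.0 - p))

# Assumed two-level model: P(first level) = F(eta), with eta = b0 + b1 * x.
b0, b1 = -1.0, 0.5  # hypothetical parameter values
for x in (-2.0, 0.0, 3.0):
    eta = b0 + b1 * x
    p1 = logistic_cdf(eta)
    p2 = 1.0 - p1
    assert 0.0 < p1 < 1.0
    # The log of the ratio of the two probabilities is linear in x.
    assert abs(logit(p1) - eta) < 1e-12
    assert abs(math.log(p1 / p2) - eta) < 1e-12
    print(f"x={x:+.1f}  eta={eta:+.2f}  P(y=1)={p1:.4f}  P(y=2)={p2:.4f}")
```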

For r response levels, JMP fits the probabilities that the response is one of r different response levels given by the data values. The probability estimates must all be positive, and for a given configuration of X’s, they must sum to 1 over the response levels. The function that JMP uses to predict probabilities is a composition of a linear model and a multi-response logistic function. This is sometimes called a log-linear model because the logs of ratios of probabilities are linear models. JMP relates each response probability to the rth probability and fits a separate set of design parameters to these r − 1 models.
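A minimal sketch of this construction, assuming r = 3 levels, one predictor, and the last level as the reference: each of the r − 1 linear predictors models the log of the ratio of a level's probability to the rth probability, and a multi-response logistic function turns them into probabilities. The parameter values and the name `multinomial_probs` are hypothetical.

```python
import math

def multinomial_probs(etas):
    """Probabilities for r levels from r - 1 linear predictors.

    etas[j] models log(P(level j) / P(level r)); level r is the reference
    and contributes a linear predictor of 0.
    """
    expo = [math.exp(e) for e in etas] + [1.0]  # reference level: exp(0) = 1
    total = sum(expo)
    return [v / total for v in expo]

# Hypothetical setting: r = 3 levels, one predictor x, and a separate
# (intercept, slope) pair for each of the r - 1 = 2 non-reference levels.
params = [(0.5, -1.0), (-0.2, 0.8)]
x = 1.5
etas = [a + b * x for a, b in params]
probs = multinomial_probs(etas)

assert all(p > 0 for p in probs)       # every probability is positive
assert abs(sum(probs) - 1.0) < 1e-12   # they sum to 1 over the levels
for j, eta in enumerate(etas):
    # The log of the ratio to the rth probability equals the linear predictor.
    assert abs(math.log(probs[j] / probs[-1]) - eta) < 1e-12
print([round(p, 4) for p in probs])
```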

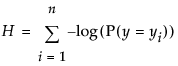

The fitting principle is called maximum likelihood. It estimates the parameters so that the joint probability of all the responses given by the data is the greatest the model can attain. Rather than reporting the joint probability (likelihood) directly, it is more manageable to report the negative of its logarithm.

The uncertainty (–log-likelihood) is the sum of the negative logs of the probabilities that the model attributes to the responses that actually occurred in the sample data. For a sample of size n, it is often denoted as H and written

$$H = \sum_{i=1}^{n} -\log\big(P(y = y_i)\big)$$

where $y_i$ is the response observed for the ith observation.
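As a concrete check of the uncertainty H, this sketch sums the negative logs of the probabilities a model assigned to the responses that actually occurred, and confirms that the result equals the negative log of their product (the joint probability). The probability values and the name `neg_log_likelihood` are made up for illustration.

```python
import math

def neg_log_likelihood(prob_of_observed):
    """H: sum over observations of -log(probability the model gave the observed response)."""
    return sum(-math.log(p) for p in prob_of_observed)

# Hypothetical model probabilities for the response each observation actually had.
probs = [0.9, 0.7, 0.6, 0.95]
H = neg_log_likelihood(probs)

# H is the negative log of the joint probability (the likelihood).
likelihood = math.prod(probs)
assert abs(H - (-math.log(likelihood))) < 1e-12

# A model that assigns lower probability to what occurred has a larger H.
H_worse = neg_log_likelihood([0.5, 0.5, 0.5, 0.5])
print(f"H={H:.4f}, H_worse={H_worse:.4f}")
```

Working with H rather than the likelihood avoids multiplying many small probabilities, which can underflow for large samples.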

The nominal model can take a lot of time and memory to fit, especially if there are many response levels. JMP tracks the progress of its calculations with an iteration history, which shows the –log-likelihood values decreasing as the parameter estimates converge.
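To illustrate what an iteration history looks like, this sketch fits a binary logit model with one predictor and records the negative log-likelihood after each step. It uses Newton-Raphson, a standard method for logistic likelihoods; whether JMP uses this exact algorithm is an assumption not stated here. The data and the function `neg_log_lik` are hypothetical.

```python
import math

# Illustrative data: one predictor x and a two-level response coded 1/0.
xs = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0]
ys = [0, 0, 1, 0, 1, 0, 1, 1]

def neg_log_lik(b0, b1):
    """Negative log-likelihood of the binary logit model P(y = 1) = F(b0 + b1 * x)."""
    h = 0.0
    for x, y in zip(xs, ys):
        p = 1.0 / (1.0 + math.exp(-(b0 + b1 * x)))
        h -= math.log(p if y == 1 else 1.0 - p)
    return h

b0, b1 = 0.0, 0.0
history = [neg_log_lik(b0, b1)]
for _ in range(25):
    # Gradient and Hessian of the negative log-likelihood with respect to (b0, b1).
    g0 = g1 = h00 = h01 = h11 = 0.0
    for x, y in zip(xs, ys):
        p = 1.0 / (1.0 + math.exp(-(b0 + b1 * x)))
        w = p * (1.0 - p)
        g0 += p - y
        g1 += (p - y) * x
        h00 += w
        h01 += w * x
        h11 += w * x * x
    det = h00 * h11 - h01 * h01
    # Newton step: subtract (Hessian inverse) * gradient, using the 2x2 inverse.
    step0 = (h11 * g0 - h01 * g1) / det
    step1 = (h00 * g1 - h01 * g0) / det
    b0, b1 = b0 - step0, b1 - step1
    history.append(neg_log_lik(b0, b1))
    if abs(history[-2] - history[-1]) < 1e-10:
        break  # converged: the negative log-likelihood has stopped changing

for i, h in enumerate(history):
    print(f"iteration {i}: -log-likelihood = {h:.6f}")
```

With more response levels, each step involves more parameters and a larger Hessian, which is one reason the nominal model becomes expensive to fit.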

The simplest model for a nominal response is a set of constant response probabilities fitted as the occurrence rates for each response level across the whole data table. In other words, the probability that y is response level j is estimated by dividing the count for response level j, $n_j$, by the total sample count, $n$, and is written

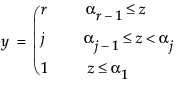
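A short sketch of this base model: the occurrence rate of each level is its count divided by the total count, and the resulting negative log-likelihood gives the baseline that fuller nominal models are compared against. The responses below are hypothetical. The sketch also checks that giving each level an equal probability yields a larger negative log-likelihood than the occurrence rates.

```python
import math
from collections import Counter

# Hypothetical nominal responses with three levels.
responses = ["a", "b", "a", "c", "a", "b", "a", "c", "b", "a"]
n = len(responses)
counts = Counter(responses)
rates = {level: nj / n for level, nj in counts.items()}  # p_j = n_j / n

# Negative log-likelihood of the constant-probability model.
H0 = sum(-math.log(rates[y]) for y in responses)

# An alternative constant model that gives each level probability 1/3 fits worse.
H_uniform = sum(-math.log(1 / 3) for _ in responses)
assert H_uniform > H0
print(rates, round(H0, 4))
```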

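With an ordinal response (Y), as with nominal responses, JMP fits probabilities that the response is one of r different response levels given by the data. The ordinal model fits the cumulative probabilities, using the same design parameters β for every level and a separate intercept $\alpha_j$ for each of the first r − 1 levels:

$$P(y \le j) = F(\alpha_j - X\beta), \qquad j = 1, \ldots, r - 1$$

where r response levels are present and F(x) is the standard logistic cumulative distribution function.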

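Another way to write this is in terms of an unobserved continuous variable, z, that causes the ordinal response to change as it crosses various thresholds $\alpha_1 < \alpha_2 < \cdots < \alpha_{r-1}$:

$$y = \begin{cases} 1 & \text{if } z \le \alpha_1 \\ j & \text{if } \alpha_{j-1} < z \le \alpha_j, \quad 1 < j < r \\ r & \text{if } z > \alpha_{r-1} \end{cases}$$

where z is an unobservable function of the linear model and error,

$$z = X\beta + \varepsilon$$

and ε has the standard logistic distribution.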
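The two descriptions of the ordinal model can be checked against each other numerically. This sketch assumes the sign convention P(y ≤ j) = F(α_j − Xβ), which is what thresholding z = Xβ + ε with logistic ε implies. It computes the level probabilities from the cumulative probabilities, then simulates z and counts how often it falls between each pair of thresholds. The thresholds, the value of the linear predictor, and the function names are hypothetical.

```python
import math
import random

def logistic_cdf(x):
    """Standard logistic CDF F(x)."""
    return 1.0 / (1.0 + math.exp(-x))

def ordinal_probs(alphas, eta):
    """P(y = j), j = 1..r, from cumulative probabilities P(y <= j) = F(alpha_j - eta)."""
    cum = [logistic_cdf(a - eta) for a in alphas] + [1.0]  # P(y <= r) = 1
    return [cum[0]] + [cum[j] - cum[j - 1] for j in range(1, len(cum))]

def sample_latent(alphas, eta, rng):
    """Draw y by thresholding z = eta + eps, where eps is standard logistic."""
    u = rng.random()
    while u == 0.0:  # avoid log(0)
        u = rng.random()
    z = eta + math.log(u / (1.0 - u))  # inverse-CDF draw from the logistic
    for j, a in enumerate(alphas, start=1):
        if z <= a:
            return j
    return len(alphas) + 1

alphas = [-1.0, 0.5, 2.0]  # hypothetical thresholds for r = 4 levels
eta = 0.3                  # hypothetical value of the linear predictor X*beta
probs = ordinal_probs(alphas, eta)

rng = random.Random(1)
draws = 100_000
freq = [0] * len(probs)
for _ in range(draws):
    freq[sample_latent(alphas, eta, rng) - 1] += 1

for j, (p, f) in enumerate(zip(probs, freq), start=1):
    print(f"level {j}: model {p:.4f}  simulated {f / draws:.4f}")
```

Because every level shares the same β and only the intercepts differ, increasing Xβ shifts probability toward the higher levels for all cumulative splits at once.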